End Credits, a fixed media composition included in the Fan Art album, is documented in five sections. The first section, Program, is the program note to be included in a concert booklet or album promotion. In it, I share information and thoughts that may help listeners appreciate the music. The second section, Form, is for creators who want to learn how I used electronic instruments to create a complete piece. The third section, Code, is for technologists who wish to understand how I coded the piece; musicians familiar with code-based environments like SuperCollider and Max will benefit from analyzing the code. In the fourth section, Inspirations, I share why I chose to write the piece. That content is too personal for the program note. The last section, Uniquely Electronic, is a bonus featuring sounds and ideas I could not express in an album format. Fan Art is available as streaming stereo tracks, but the pieces were originally designed for multichannel sound installations. The last section provides resources to realize the songs in Fan Art at full capacity.

A PDF version of this article is also available.

Program

End Credits is an algorithmic composition based on the harmonic progression of Debussy’s Clair de Lune. The SuperCollider code written for the piece generates notes with unique overtones, and the overall sound reminds me of organ music at viewings. My friend and I joke about writing each other’s farewell music, and now I have one for him. If he doesn’t like it, I will use it as my exit theme.

Listen on other platforms: https://noremixes.com/nore048/

Form

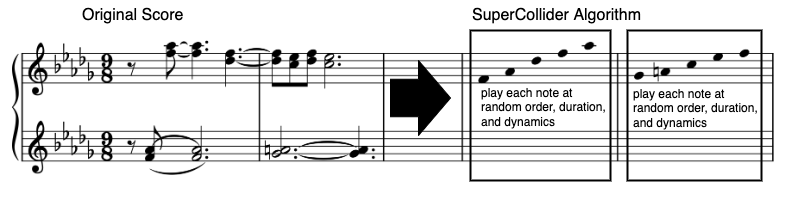

End Credits uses the harmony of Debussy’s Clair de Lune. The downloadable SuperCollider code, EndCredits.scd, makes sound according to the following instructions:

1. Choose the list containing all notes present in measure x.

2. Scramble the order of the notes.

3. Play the notes at random times. There is a 50% chance that two notes are played simultaneously.

4. When all notes in the list have been used, move to measure x+1.

5. Repeat steps 1-4 at a slow tempo (quarter note = 3.6 seconds). End Credits uses the harmonic progression of mm. 1-27 of Clair de Lune.
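The measure-level instructions above can be modeled as a Python generator. This is a hypothetical sketch, not the author's SuperCollider code. The `chords` data holds placeholder MIDI numbers, not the actual notes of Clair de Lune, and `measure_sequence` is an illustrative name. Note timing (step 3) is left out here, so only the scrambling and the measure-by-measure progression are shown.

```python
import random
from typing import Iterator, List

QUARTER_SEC = 3.6        # quarter note = 3.6 seconds, as stated above
BPM = 60 / QUARTER_SEC   # roughly 16.7 quarter notes per minute


def measure_sequence(chords: List[List[int]], rng: random.Random) -> Iterator[List[int]]:
    """Yield each measure's notes in a freshly scrambled order, one measure after another."""
    for measure in chords:
        notes = list(measure)  # copy so the source data is not mutated
        rng.shuffle(notes)     # Python counterpart of SuperCollider's .scramble
        yield notes


# Placeholder MIDI pitches, NOT transcribed from Clair de Lune.
chords = [[61, 65, 68, 73], [60, 63, 68, 72], [58, 63, 67, 70]]

for number, notes in enumerate(measure_sequence(chords, random.Random(1)), start=1):
    print(f"measure {number}: {notes}")
```

Passing an explicit `random.Random` makes a run repeatable for testing. An unseeded generator gives a different order every time, much like re-running the .scd file.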

EndCredits.scd also generates each note according to the following instructions:

1. Make a sine tone with randomized slow vibrato and tremolo using two LFOs. Randomize the amplitude envelope, LFO rate, LFO amount, and pan position.

2. Make a single note by combining five sounds made in step 1. Then randomize the positions, frequencies, and pan positions of those five sounds to make a slightly detuned note with a wide stereo image.

3. Make a single note with a random number of overtones using the note generated in step 2. The note’s duration is also random but almost always longer than a quarter note (3.6 seconds).
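Steps 2 and 3 amount to additive synthesis: each partial of a note is a cluster of five slightly detuned sines. The Python sketch below computes only the frequency and pan choices. It assumes a ±8 cent detune range and a 1-10 overtone range, and its function names are hypothetical. None of these values are taken from EndCredits.scd.

```python
import random
from dataclasses import dataclass
from typing import List

LAYERS = 5  # step 2: five sine "cells" per detuned tone


@dataclass
class Cell:
    freq: float  # Hz
    pan: float   # -1.0 (left) to 1.0 (right)


def midi_to_hz(midi: float) -> float:
    """Equal-tempered conversion with A4 = MIDI 69 = 440 Hz."""
    return 440.0 * 2 ** ((midi - 69) / 12)


def detuned_tone(freq: float, rng: random.Random, cents: float = 8.0) -> List[Cell]:
    """Step 2: five copies, each detuned by up to +/-cents and panned at random (assumed ranges)."""
    return [
        Cell(freq * 2 ** (rng.uniform(-cents, cents) / 1200), rng.uniform(-1.0, 1.0))
        for _ in range(LAYERS)
    ]


def make_note(midi: float, overtones: int, rng: random.Random) -> List[Cell]:
    """Step 3: stack harmonics 1..overtones, each built as a detuned tone.

    overtones=1 yields the fundamental only, matching the "no partials" comment
    on ~note.(50,10,0.3,1) in the Code section.
    """
    f0 = midi_to_hz(midi)
    cells: List[Cell] = []
    for harmonic in range(1, overtones + 1):
        cells.extend(detuned_tone(f0 * harmonic, rng))
    return cells


rng = random.Random(7)
note = make_note(50, overtones=rng.randint(1, 10), rng=rng)  # overtone count range is assumed
```

Detuning in cents rather than hertz keeps the beating proportional across the harmonics. This is one common way to get the wide, slightly unstable organ tone described below.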

The resulting sound is an imaginary organ capable of changing the stops at every note. The instrument also seems to have multiple sustain pedals.

The compositional objective of End Credits is akin to minimalism: I wanted to create a simple process that yields unexpectedly delightful sounds. So I made an ambient piece using additive synthesis, traditional harmony, and computer-aided instructions. There’s no new technology or concept here; the new sounds come from combining old ideas.

Code

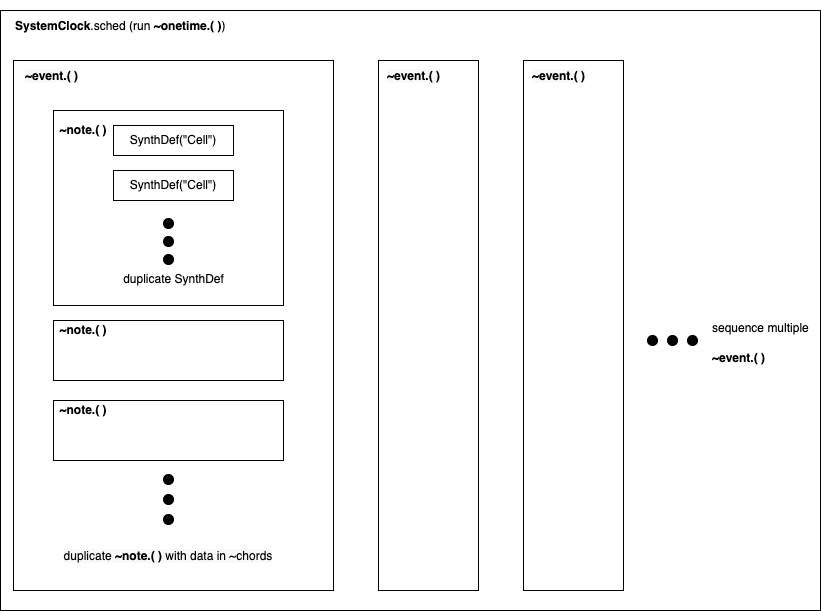

EndCredits.scd, the SuperCollider file I made to generate the album version of End Credits, has the following sections.

- SynthDef("Cell"): makes a sine tone with controlled random values

- ~note: makes a note event using SynthDef("Cell")

- ~chords: an arrayed collection of note numbers representing the harmonic content of each measure

- ~event: plays one measure using ~note with pitches from ~chords

- SystemClock: plays the music

To make sense of this section, open EndCredits_Analysis.scd in SuperCollider and refer to the code while reading the following subsections. The analysis file contains simplified, working code.

SynthDef("Cell")

End Credits uses one SynthDef featuring two LFOs, one ASR envelope, and one stereo sine tone generator. Playing two instances of SynthDef("Cell") with slight pitch differences makes a simple detuned sound. The code in section //1. "Cell" without randomness shows the simplest form of the instrument. The actual SynthDef used in the piece is in //2. "Cell" with randomness. It applies ranged random values to vary the amplitude envelope’s attack and release times, the LFOs’ frequency, phase, and amplitude, and the pan position.
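The signal flow of a single "Cell" can be rendered offline in Python. One LFO wobbles a sine's frequency (vibrato), a second LFO wobbles its amplitude (tremolo), an attack-sustain-release envelope shapes it, and an equal-power pan places it in the stereo field. This is an illustrative sketch, not a translation of the SynthDef. Every random range below is an assumption, and `render_cell` and `asr` are hypothetical names.

```python
import math
import random
from typing import List, Tuple

SR = 8000  # low sample rate keeps the sketch fast; real audio would use 44100 or 48000


def asr(t: float, attack: float, sustain: float, release: float) -> float:
    """Linear attack-sustain-release envelope value at time t (seconds)."""
    if t < attack:
        return t / attack
    if t < attack + sustain:
        return 1.0
    return max(0.0, 1.0 - (t - attack - sustain) / release)


def render_cell(freq: float, dur: float, amp: float, rng: random.Random) -> List[Tuple[float, float]]:
    """Render one cell as (left, right) samples with randomized LFOs, envelope, and pan."""
    attack = rng.uniform(0.5, 2.0)   # assumed ranges, chosen for illustration only
    release = rng.uniform(1.0, 3.0)
    sustain = max(0.0, dur - attack - release)
    vib_rate, vib_depth = rng.uniform(0.1, 0.5), rng.uniform(0.5, 3.0)    # Hz, +/- Hz
    trem_rate, trem_depth = rng.uniform(0.1, 0.5), rng.uniform(0.1, 0.4)  # Hz, 0..1
    lfo_phase = rng.uniform(0.0, 2 * math.pi)
    pan = rng.uniform(-1.0, 1.0)
    theta = (pan + 1) * math.pi / 4  # equal-power pan: [-1, 1] -> [0, pi/2]
    gain_l, gain_r = math.cos(theta), math.sin(theta)

    out: List[Tuple[float, float]] = []
    phase = 0.0
    for i in range(int((attack + sustain + release) * SR)):
        t = i / SR
        inst_freq = freq + vib_depth * math.sin(2 * math.pi * vib_rate * t + lfo_phase)
        phase += 2 * math.pi * inst_freq / SR  # integrate frequency so the vibrato stays smooth
        trem = 1.0 - trem_depth * (0.5 + 0.5 * math.sin(2 * math.pi * trem_rate * t))
        s = amp * asr(t, attack, sustain, release) * trem * math.sin(phase)
        out.append((s * gain_l, s * gain_r))
    return out


cell = render_cell(220.0, dur=8.0, amp=0.2, rng=random.Random(3))
```

Summing two such cells with slightly different `freq` values reproduces the simple detuned sound described above.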

~note

~note is a function with the following parameters.

~note.(pitch in MIDI number, duration (sec), volume (0-1), number of overtones);

Given a value for each parameter, ~note creates a tone with a varying number of detuned overtones. As //3. ~notes shows, loops (.do) and ranged random number generators (rrand) are used extensively. Try running the following lines in SuperCollider to hear the difference. Notice that the sounds are not identical each time the code is re-evaluated.

~note.(50,10,0.3,1); //no partials

~note.(50,10,0.3,5); //some partials

~note.(50,10,0.3,10); //many partials

~chords and ~event

~chords is an array of interval values representing the notes in each measure of Clair de Lune. As //4. ~chords shows, the .scramble method is appended to every measure’s list to randomize the order of its notes. For analysis purposes, ~chords in the analysis file contains only three lists.

~event plays ~note with the pitch choices stored in ~chords. Each measure of End Credits is generated with the following parameters:

~event.(measure index number, note duration factor, amplitude, overtones, harmony probability (0-1.0))

As //5. ~event shows, the note duration factor is a multiplier for each note’s duration. The larger the number, the longer the notes, resulting in a sustain pedal-like effect. The number of overtones is also slightly randomized for variety. The last parameter, harmony probability, controls the chance that the next note is played simultaneously with the current one.
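The interaction between harmony probability and the duration factor can be modeled as a timeline builder. In this hypothetical Python sketch, each note after the first either starts together with the previous note (with probability `harmony_prob`) or starts after a random gap. The gap range, the base note length, and the name `build_measure` are assumptions rather than the author's values. The amplitude and overtone arguments of ~event are omitted.

```python
import random
from typing import List, NamedTuple

QUARTER_SEC = 3.6


class NoteEvent(NamedTuple):
    onset: float     # seconds from the start of the measure
    midi: int
    duration: float  # seconds


def build_measure(notes: List[int], dur_factor: float, harmony_prob: float,
                  rng: random.Random) -> List[NoteEvent]:
    """Schedule one measure: scramble pitches, then chain onsets with optional simultaneities."""
    order = list(notes)
    rng.shuffle(order)
    events: List[NoteEvent] = []
    t = 0.0
    for i, midi in enumerate(order):
        # With probability harmony_prob the note shares the previous onset; otherwise time advances.
        if i > 0 and rng.random() >= harmony_prob:
            t += rng.uniform(0.25, 1.0) * QUARTER_SEC  # assumed gap range between onsets
        # Assumed base length of one to two quarter notes, stretched by the duration factor.
        duration = rng.uniform(1.0, 2.0) * QUARTER_SEC * dur_factor
        events.append(NoteEvent(t, midi, duration))
    return events


for ev in build_measure([61, 65, 68, 73], dur_factor=2.0, harmony_prob=0.5, rng=random.Random(2)):
    print(f"{ev.onset:6.2f}s  midi {ev.midi}  {ev.duration:5.2f}s")
```

Raising `dur_factor` makes each note ring past the onsets of the notes that follow, so more notes overlap. That overlap is the pedal-like blur described above.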

SystemClock

The code in //6. SystemClock puts everything together to produce sound. It uses a Routine to step ~event through all the measures in ~chords, and SystemClock adds a 2-second silence, a short pause before the music begins.
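SystemClock and Routine have no direct Python equivalent, but the standard library's `sched` module captures the same idea: events are queued at absolute times and run in order. The sketch below drives the scheduler with a simulated clock so it finishes instantly. The 2-second lead-in comes from the paragraph above. The fixed four-beat measure length and the names `VirtualClock` and `play_piece` are assumptions, since in the piece each measure's length depends on its random note timing.

```python
import sched
from typing import Callable, List, Tuple

QUARTER_SEC = 3.6
LEAD_IN_SEC = 2.0  # silence before the first measure


class VirtualClock:
    """Fake time source so the schedule runs without real waiting."""

    def __init__(self) -> None:
        self.now = 0.0

    def time(self) -> float:
        return self.now

    def sleep(self, seconds: float) -> None:
        self.now += seconds


def play_piece(num_measures: int, beats_per_measure: int,
               play_measure: Callable[[int], None]) -> List[Tuple[float, int]]:
    """Queue every measure after the lead-in, run the queue, and return (start time, index) pairs."""
    clock = VirtualClock()
    s = sched.scheduler(clock.time, clock.sleep)
    started: List[Tuple[float, int]] = []

    def start(index: int) -> None:
        started.append((clock.time(), index))  # record when the measure actually began
        play_measure(index)

    measure_len = beats_per_measure * QUARTER_SEC
    for index in range(num_measures):
        s.enterabs(LEAD_IN_SEC + index * measure_len, 1, start, argument=(index,))
    s.run()
    return started


timeline = play_piece(3, 4, lambda i: print(f"measure {i + 1}"))
```

For real-time playback, replace `VirtualClock` with `time.monotonic` and `time.sleep`, which are the scheduler's defaults.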

Inspirations

I composed End Credits in February 2021, at the end of the strict COVID-19 isolation period. I must have been listening to Debussy recordings often to keep myself together. The lowest note in Clair de Lune, the Eb in m. 15, felt like the most beautiful piano note to me then. Its timing was perfect, and it resonated with both the piano’s body and the listener’s mind. I wanted to recreate that in an electronic music context, so I created a sound that imitates the slight detuning of the piano’s low register. Then, perhaps due to the COVID blues, I instructed the computer to play those sounds blurry and in slow motion.

Uniquely Electronic

End Credits has two playback modes: ~onetime and ~infinite. The one-time version has a fixed duration (8:26), is available on major distribution platforms, and can be played by any media player. The installation version goes on indefinitely with varied timbre, timing, and duration; to hear it, run EndCredits_infinite.scd in SuperCollider. Let it run for hours on solemn and not-so-happy occasions!
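The difference between the two playback modes can be modeled with a Python generator. The one-time mode walks the measure list once, while the installation mode restarts it forever with fresh randomness on every pass. The mode strings mirror ~onetime and ~infinite, but the function `playback` is hypothetical. The article does not say whether the infinite version restarts from measure 1 or varies in some other way, so looping over the measures is an assumption.

```python
import itertools
import random
from typing import Iterable, Iterator, List, Tuple


def playback(chords: List[List[int]], mode: str, seed: int = 0) -> Iterator[Tuple[int, List[int]]]:
    """Yield (measure_index, scrambled_notes); "onetime" stops, "infinite" loops forever."""
    if mode not in ("onetime", "infinite"):
        raise ValueError(f"unknown mode: {mode}")
    rng = random.Random(seed)
    passes: Iterable[int] = range(1) if mode == "onetime" else itertools.count()
    for _ in passes:
        for index, measure in enumerate(chords):
            notes = list(measure)
            rng.shuffle(notes)  # reshuffled on every pass, so repeats usually differ
            yield index, notes


chords = [[61, 65, 68], [60, 63, 68]]  # placeholder pitches, not from the score
album_version = list(playback(chords, "onetime"))
first_pass_and_a_half = list(itertools.islice(playback(chords, "infinite"), 3))
```

Because the infinite stream is lazy, a consumer takes only what it needs with `itertools.islice`. An installation would simply keep iterating.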

More Analysis and Tutorials

- 847 Twins – Brief Analysis: analysis of a piece in the same format as the above.

- Computer Music Practice Examples (CMPE) – tutorials on using computer music technology for instrument design, composition, performance, and presentation

Updated on 4/13/2023